This morning, I was surprised by a comment on this YouTube video, in which I pointed out the fallacies of a creationist, Rob Carter. That video starts with me summarizing my relevant background as a developmental biologist. This commenter then makes this scurrilous accusation!

Rob Carter is correct, and PZ Myers, as an old-fashioned population geneticist, is wrong. Don’t you understand that environmental conditions and factors affect the organisms’ epigenomes? DNA is just a passive information data repository and its reading is completely controlled and regulated by epigenetic mechanisms and factors.

First of all, did this guy even listen to the video before he rushed in with his knee-jerk defense of Rob Carter?

Secondly, I am not a population geneticist. I am a professor at a small liberal arts college, which means I have to be a jack-of-all-trades within my discipline — I can teach population genetics at the undergraduate level, but I would never claim to be a pop gen guy. That’s the domain of people like Dan Cardinale and Zach Hancock on YouTube, and they could tie me in knots with their expertise. They definitely shred Rob Carter, who doesn’t even understand it as well as I do.

I am primarily a developmental biologist. That’s my focus and my interest, although in recent years I’ve been expanding that focus into eco-evo-devo; I’ve taught courses in that. My research is all about looking at the development of local spiders, to identify which factors in each species’ development shape its adaptation to a particular niche, and how we can have so many different species of spiders co-existing in my backyard. To claim that I don’t understand the multiple factors that affect development is ludicrous. Rob Carter is just droning out buzzwords with little comprehension, and to someone who actually knows the subject he is discussing, he comes off as a fool.

Just a reminder: 15 years ago, in Dublin, Ireland, I was confronted by a group of Muslim apologists who tried to bamboozle me with claims about Mohammed’s revelations about development. They asked (at the 7-minute mark), “Are you an embryologist?” to which I said “Yes,” and that took them aback a bit.

I’ve always said I am a developmental biologist. My commenter was trying to make a peculiar ad hominem, suggesting that I was wrong because I’m only an “old-fashioned population geneticist,” and then rattling off a bunch of concepts that are actually the meat-and-potatoes of developmental biology.

Also, that “DNA is just a passive information data repository” nonsense is a stratagem used by creationists to deny the significance of changes to the genome in evolution.

Masters of the Universe is playing at the Morris Theatre right now, and I was lured in. It’s terrible. It’s two hours of pointless reiteration of an intellectual property that was contrived in the 1980s as a tool to sell toys: it had a poorly animated cartoon show, a glorified advertisement, that played every afternoon in that sweet spot when kids were getting home from school. It was repetitive noise. Every episode had roughly the same structure: a squad of freakishly weird characters, led by a bad guy with a skull for a face, would try to take over a castle guarded by a squad of mostly human, muscle-bound leaders, and be inevitably defeated. The same characters fought each other over and over again, and each one was for sale at Toys’R’Us as an action figure. Mattel cleaned up. Every 8- to 12-year-old boy wanted a set of action figures they could play with as they watched the cartoon, and they would bring them to the playground to battle with their friends’ toys.

I know because my kids grew up in the 1980s, and we had to buy all the toys. On their demands, we had He-Man and Beast Man and Moss Man and Man-At-Arms and Skeletor and Orko and others, and we also had the Castle Grayskull play set and various vehicles. This was also the time in my career when we were frequently moving to various places around the country, and one of the sadder things about that was frequently packing up everything we owned into a truck and driving to a different state, a different apartment. One of my memories was the final step in moving out, and that was going through the rooms and sweeping up the detritus and throwing it into one last box. It was always an assortment of He-Man figures and accessories that I had to rescue lest the kids yell at me.

So I had to go see this movie. It was my mental equivalent of tidying up the garbage in the corners of my brain.

It is a competently made movie. It’s got some good actors, Idris Elba and Alison Brie, and some new (to me) players, who did a good job, although I wish all of them were acting in good movies. I normally detest Jared Leto, but in this movie he’s unrecognizable behind a skull face and a comically affected accent, which is the only way to see Leto in anything. The plot is familiar: Skeletor and his weird pack of freaks take over the world of Eternia, He-Man shows up with a magic sword and beats everyone up (there is a lot more killing of bit players in the movie than in the old TV series), and the status quo is restored. Ho hum.

I kept wondering why this movie was made. It wasn’t for Art, because it’s entirely derivative and lacking in novelty. It wasn’t to tell a story that would resonate with viewers, because it could have been a cheap 20-minute cartoon rather than an expensive 2-hour movie. It wasn’t to provide moral instruction, although it did include an appearance by Orko at the end to briefly summarize the lesson taught by the show, just like the old cartoon. I don’t even recall what the message was; it was so perfunctory and so irrelevant to the movie I’d just watched. No, this was clearly the product of a thought by a marketing executive at Mattel: “Let’s take another pass at the wallets of the 1980s generation that we successfully bilked 40 years ago!” It’s a naked attempt to milk nostalgia.

They got me. I contributed to their $54 million box office on a movie that cost $200 million to make. Be smarter than me and don’t fall for it. The movie is not good enough to outweigh the bad faith premise behind its creation.

While many speakers focus entirely on the content of a talk, I put lots of effort into my slides. In fact, my ‘first’ slide is actually three slides that have saved me from many a technical disaster.

Here’s a typical first slide from my deck:

I use a black background and a 4:3 aspect ratio.

While many speakers use the modern 16:9 widescreen format, I stick with 4:3. Why? Because many projector systems are still designed for 4:3. If you project a 16:9 slide onto a 4:3 screen, it can get cut off or letterboxed. But if you project a 4:3 slide onto a 16:9 screen, it puts black bars on the sides—which blend into my black background.
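To make the arithmetic behind this concrete, here is a minimal Python sketch. It assumes the projection system scales the slide to fit the screen without cropping (some systems instead zoom to fill, which is when edges get cut off), and the resolutions 1024×768 and 1920×1080 are illustrative assumptions rather than figures from the post.

```python
from fractions import Fraction


def fit_to_screen(slide_w, slide_h, screen_w, screen_h):
    """Scale a slide to fit a screen without cropping, keeping its aspect ratio.

    Returns (scaled_w, scaled_h, side_bar, top_bar): the displayed slide size
    and the thickness of each black bar at the left/right and top/bottom.
    Assumes fit-to-screen scaling; crop-to-fill systems behave differently.
    """
    # The largest scale factor that still lets the whole slide fit on screen.
    scale = min(Fraction(screen_w, slide_w), Fraction(screen_h, slide_h))
    scaled_w = slide_w * scale
    scaled_h = slide_h * scale
    side_bar = (screen_w - scaled_w) / 2  # pillarbox bars (left and right)
    top_bar = (screen_h - scaled_h) / 2   # letterbox bars (top and bottom)
    return tuple(float(v) for v in (scaled_w, scaled_h, side_bar, top_bar))


# A 4:3 slide on a 16:9 screen: the bars appear at the sides.
print(fit_to_screen(1024, 768, 1920, 1080))  # (1440.0, 1080.0, 240.0, 0.0)
# A 16:9 slide on a 4:3 screen: the bars appear at the top and bottom.
print(fit_to_screen(1920, 1080, 1024, 768))  # (1024.0, 576.0, 0.0, 96.0)
```

With a black slide background, the 240-pixel side bars in the first case are indistinguishable from the slide itself.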

I also put a green frame around the edge of this initial slide. Before the audience enters, I look at the screen. If I can see the entire green frame, I know my slides aren’t being cut off by the projector.

The public never get to see that slide. It is just for me to test the system.

Tip One: Have a secret diagnostic slide just for you.

Before the audience enters, I switch to my second slide. This one looks almost identical, but the green frame is gone, and it contains an embedded audio track.

As people enter the hall, my music plays automatically from this slide (with the speaker icon hidden). This does two things. First, it sets the atmosphere I want, thus avoiding that awkward, dead-silent morgue feeling or relying on the organiser’s random playlist. Second, it shows that my laptop’s audio feed is working through the sound system.

Tip Two: Use your own music to test the laptop feed and control the atmosphere.

Finally, as I am being introduced, I use my remote clicker to advance to my third pre-slide.

To the audience, it looks the same as the previous one. But if you look closely at the bottom-left corner, there is a tiny green dot. If I click and the green dot appears, I know my remote is connected and the system hasn’t frozen. If it has frozen, I can speak for 10 minutes without slides while the problem is fixed (hopefully!).

There is nothing worse than building up audience anticipation, only to find out you can’t advance your first slide. With these quick, hidden checks, you’ll never have to worry about tech failures again.

Tip Three: Use the Green Dot Trick to test the system just before you start.

Last week I was walking along the street and recognised a student that I had taught many years ago. They saw me, waved, and shouted ‘Fresh Fish Sold Here Today.’ I was delighted. I use this phrase when I teach presentation skills and was amazed that they had remembered it years later.

The phrase comes from a great magic trick invented by Arnold Furst. A magician starts off with a strip of paper with the words FRESH FISH SOLD HERE TODAY printed along it. In my version, I explain that it’s a sign from a fishmonger’s window and point out that the word TODAY is redundant (when else would you be selling the fish?). I tear TODAY off the strip. Next, I point out that it’s obvious where the fish is being sold, and so I tear off HERE too. Then I point out that you don’t need the word SOLD because it’s obviously a shop, and tear SOLD off. Finally, I note that the fishmonger is obviously selling fish and so that word is torn off too. I am just left with the one word that really matters – FRESH!

In the trick, the pieces are then magically restored, but I don’t bother with that bit. Instead, I point out that the same principle applies to the text on slides. It’s important to be as efficient as possible. Whenever there is text on a slide, audiences start to read it, and so switch their attention from the speaker to the slide. To prevent this, cut any words to the absolute minimum. If it helps, imagine that you are paying £1000 for each word. What can go? In an ideal world, there are no words at all!

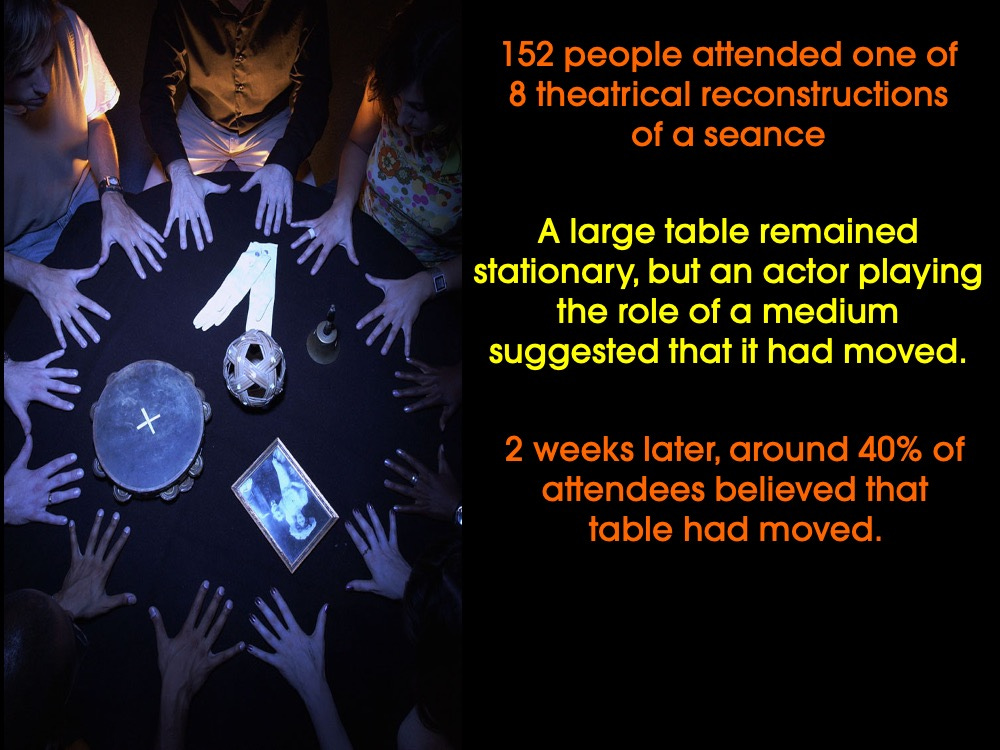

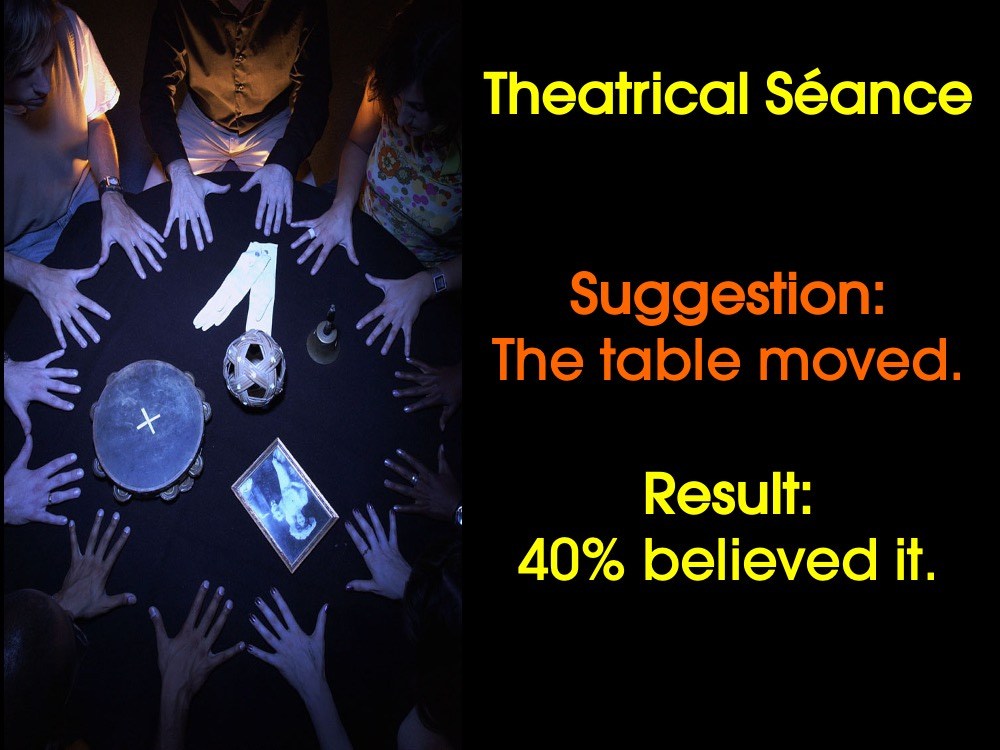

For instance, look at this early slide from one of my talks on the psychology of suggestion and séances….

It’s fine, but loads of those words can go. Apply the FRESH FISH SOLD HERE TODAY rule and now it reads….

Look at your slides. If you had to pay £1000 per word, what would go?

Shop image produced by AI.

I Told You So! Scientists Who Were Ridiculed, Exiled, and Imprisoned for Being Right (St Martin’s Press) by Matt Kaplan

We like to think that science is guided by a noble ideal: evidence rules. When new data emerges, scientists revise their theories, abandon cherished ideas and move on. Except, of course, that this is largely nonsense. Science may aspire to objectivity, but it is practised by humans, people riddled with cognitive biases, egos, tribal instincts and professional anxieties.

It is in this messy, human space that Matt Kaplan’s I Told You So firmly plants itself. At the heart of the book is the tragic and infuriating story of Ignaz Semmelweis, the 19th-century physician who demonstrated that simple hand hygiene by medical staff dramatically reduced deaths from puerperal fever, an infection that was killing vast numbers of women after childbirth. Kaplan tells this story with empathy and narrative drive, capturing Semmelweis as a dogged and deeply principled figure. Yet he also makes clear that Semmelweis was uncomfortable with self-promotion and slow to package his findings in ways that his peers could, or would, accept. This was not simply a failure of evidence, but a failure of communication and culture. By weaving in the work and personalities of Semmelweis’s contemporaries, Kaplan vividly recreates a scientific community on the brink of understanding infection, yet stubbornly resistant to ideas that challenged entrenched hierarchies.

Kaplan sets Semmelweis’s approach in sharp contrast to that of Louis Pasteur, who worked in adjacent areas of microbiology and vaccination during the same period. Pasteur emerges as an undeniable scientific giant and a gifted communicator, but also as someone deeply unethical in how he presented his work and marginalised competitors. Kaplan details how Pasteur rewrote the narratives of his discoveries to make them more compelling, freely appropriated others’ ideas without proper credit and used his growing fame to erase rivals from the story.

While Semmelweis provides the book’s backbone, Kaplan deftly segues into modern parallels. Threads from palaeontology, drug development and animal welfare show that the dynamics of exclusion and dismissal are far from historical curiosities. Running alongside the Semmelweis narrative is the contemporary story of Katalin Karikó, whose work on mRNA was ignored, ridiculed and repeatedly defunded. The applications of her work were not immediately obvious, nor was it fashionable, and so Karikó was turned down for funding, pushed out of prestigious research environments, demoted and belittled. Yet her years of experience and results eventually led to her joining BioNTech in 2013, and to the subsequent development of the mRNA vaccines that saved millions of lives and helped bring the Covid-19 pandemic under control.

Kaplan uses these stories to build a compelling and uncomfortable argument: science does not always advance by rewarding the best ideas, but too often by amplifying the loudest voices. As he writes, “science is rich with tales of those who were right but who had an exceptionally challenging explanation that they needed to communicate.” The lesson is not that all outsiders are correct, nor that consensus is inherently bad, but that healthy science requires humility, curiosity and better listening.

This is where Kaplan’s excellent book quietly becomes a manifesto for science communication. If scientific progress is to be driven by the best ideas rather than the sharpest elbows, then scientists and communicators alike have a responsibility to seek out those doing careful, unfashionable or poorly advertised work and help them be heard. Not everyone can, or should, communicate like Pasteur. Yet the fact that his version of events persists to this day, even when contradicted by his own laboratory notebooks, is perhaps the clearest demonstration of the enduring power of good storytelling in science.

This article is from New Humanist's Spring 2026 edition. Subscribe now.

Last summer, I finally visited Libya. Having worked as a freelance journalist across Africa for half a decade, I had wanted to visit Libya for years – not for the beaches or cuisine, but because I have long been drawn to the rough intrigues of North African politics, and the stories surrounding Muammar Gaddafi and his legacy. I also wanted to see the Roman, Phoenician and Greek relics that stand along the Libyan coastline, cut off from the global tourism industry.

What I discovered was a mesmerisingly beautiful but elusive country. To some degree, I knew what I was getting myself into. You can’t just go on holiday to Libya. I had to obtain a letter of invitation, two separate visas and a pre-paid personal security attachment for each side of the country, which is split between rival factions. I also had to solemnly swear to avoid any kind of journalism at any time, or risk being deported from the country.

This produced a level of paranoia I had not felt in any of the previous 37 countries I’ve visited in my long career. I doubt the government would approve of the notes that ended up feeding this piece, but I wanted to try to understand a country that holds such a peculiar place in the western imagination.

For Europeans, Libya is the closest edge of the African continent: a borderland imagined as both exotic and dangerous, a gateway through which migrants might surge north, or oil might flow west. For Americans, it is often thought of less as a nation of people than as a geopolitical riddle – from Gaddafi’s flamboyant dictatorship and the 1986 US bombing of Tripoli to the attack on the US consulate in Benghazi by a group aligned with Al-Qaeda on 11 September 2012. Libya is a canvas onto which western powers project their fears, ambitions and fantasies.

When I touched down in Tripoli at the apex of summer, I had only a cursory knowledge of the country. I knew that Libya today is less a unified nation than a fractured state suspended between competing factions. It sits between Tunisia and Egypt on the Mediterranean’s southern rim, where for most of the 20th century it traded oil for stability. Then came the bloody fall of Muammar Gaddafi in 2011, following the Libyan civil war and Nato’s intervention, which did not, as was piously promised by intervening leaders, deliver Libya into democracy. Libya has since splintered into rival administrations – the internationally recognised government in Tripoli to the west, and the eastern stronghold under General Khalifa Haftar – each backed by a rotating cast of foreign patrons. Hence my two security details.

I was taken to the sanitised centre of the capital, Tripoli, and then to the breathtaking Graeco-Roman ruins scattered along the coast. My tour guides and I also visited the villages of Berber tribes that existed in North Africa long before its Arab conquest. But this was Libya as stage décor: the approved exhibits of a country that seems to fear its own backstage.

In Martyrs’ Square, in the centre of the capital, I was delivered to the rows of gigantic Libyan flags towering over children devouring cotton candy, and families meandering between jewellery shops and cafés. My first guide, an older man with a scholar’s passion for archaeology, became a little less stiff when I joined him in his chain-smoking habit. My police escort soon lit up, too. As we drove through Tripoli, the contrasts revealed themselves: glittering hotels, largely empty, standing beside eroded apartment blocks perforated by bullet holes. Whole districts remain scarred from the 2011 conflict, when the uprising against Gaddafi turned the city into a sniper’s playground. Fifteen years later, Tripoli has not healed. I pointed to one scarred façade. “Oh, just some fighting between the militias,” my guide muttered, eager to steer the conversation toward anything else. “You know we have a very nice fish market here!”

The escort, slouched in the backseat, alternated between his phone and sleep. But he was there at every moment. Whenever I needed a light. When I had to cross the street. He even came along on a bathroom stop on the highway. Once, I had a late-night craving for a shawarma from a small bistro no more than a block from the hotel. He came with me, and we ate our spiced meat together.

I had entertained the faint hope that after each neatly choreographed stop, the three of us might slip the leash of our schedule and wander into the neighbourhoods where people actually lived, or the outskirts of the city. My requests were met with polite but firm refusal. I soon found that every step on my itinerary had to be pre-cleared, and that I would be shadowed by my guards – equal parts friendly and suspicious – at every turn.

But I could sense another Libya, beyond the curated façades, where the country’s contradictions thrive quietly. It is a country of tension and inequality. Oil wealth, which might have been a unifying resource for all Libyans, has today become a bargaining chip between militias, bureaucrats and opportunists, feeding a political economy that thrives on corruption and opacity.

And then there is religion. Libya shares some characteristics with other strict Islamic countries: the absence of alcohol, women covering up, commerce shutting on Fridays, and the constant use of religious verbiage: Inshallah (“God willing”), Alhamdulillah (“praise be to God”) or Bismillah, which is said to announce the start of any important action. To ingratiate myself I started to say the latter before each meal, as locals did, to the apparent admiration of my guide and police escort.

But Libya has its own particular brand of Islam. It’s overwhelmingly Sunni but historically rooted in Sufi traditions, particularly the Senussi movement – a puritan yet mystical brotherhood that blended tribal unity with moral restraint. This tradition once bound Libya’s deserts and tribes under a shared faith and even produced the country’s only monarch, King Idris.

Today, though religious freedom is nominally guaranteed, straying from orthodox Sunni Islam can lead to intimidation or persecution, especially in areas controlled by conservative militias. Depending on where you are in the country, the law is uneven and often dictated by local power brokers rather than any real constitution.

I got used to hearing the call to prayer, rising from minarets five times a day and broadcast on radio stations. While I didn’t see the morality police in action, I knew that in November 2024, the Libyan Interior Minister had reinstated this force, which patrols public spaces and enforces rules around “modest” clothing and the requirement for women to be accompanied by male guardians when in public.

Men moved through the streets in a disciplined palette of black, grey, brown and sand-coloured thawbs and kanduras, traditional long robes that blurred each individual into a uniform silhouette. Near the restaurants and cafés in Tripoli, I spotted young Libyans wearing western clothes, or designer shirts and jeans, but nothing loud or flashy. I didn’t see many women, except for the occasional matriarch tucked away in the back corner of a restaurant with her family, making sure the children ate.

Yet even through the narrow window given to me, the country’s beauty shone through – the marble and ruins gleamed with a serenity that made me gasp aloud. The Arch of Marcus Aurelius in the Old City of Tripoli, erected to honour the Roman Emperor and his co-emperor Lucius Verus after their victories over the Parthians, is an extraordinary relic that has survived through centuries of conquest and urban transformation. It endures not because anyone cherished it, but because no one quite got around to tearing it down.

At the ancient coastal ruin of Apollonia, I saw the remains of a once-proud Greek port that outlived its makers and their gods, its walls pitted from grenade fire. My guide, my escort and I were the only people there. It was eerie, but beautiful. Archaeological sites in Italy or Greece are often surrounded by snack shops, trinket sellers and tourist traps. In Libya there is nothing but the wind, the cry of birds and the faint whisper of the Mediterranean.

It’s a perverse irony that the post-conflict zone offers a form of tourism impossible elsewhere. Standing there, I could imagine what the ancients themselves might have heard, as if time had folded back on itself. Roman amphitheatres, detailed mosaics, coastlines that would be sought-after destinations elsewhere, all languish in silence.

Overall, my Libyan tour offered me as much valuable tourism as it did state theatre. The restrictions I went through as a tourist are a part of a larger policy of restricting free speech and the press, which includes preventing Libyans, as well as foreign journalists, from covering the challenges facing the country. One of these is the presence of migrants from Sub-Saharan Africa, who risk everything to cross Libya’s porous southern borders in the hope of reaching Europe, usually via Greece or Italy. The continual flow of these desperate people is a rebuke to the idea of Libya as a sealed and orderly state.

I was carried through a curated experience, permitted to see the ruins of antiquity but not the ruins of the present. Yet in the very act of concealment, Libya tells on itself. It reveals a nation desperate to project solidity, but in doing so shows only its divisions.

This article is from New Humanist's Spring 2026 edition. Subscribe now.

First, I have just launched a 3-minute survey on magic and it would be wonderful if you could take part. The link is here.

Third, it’s puzzle time! In my last post, I posed this puzzle…

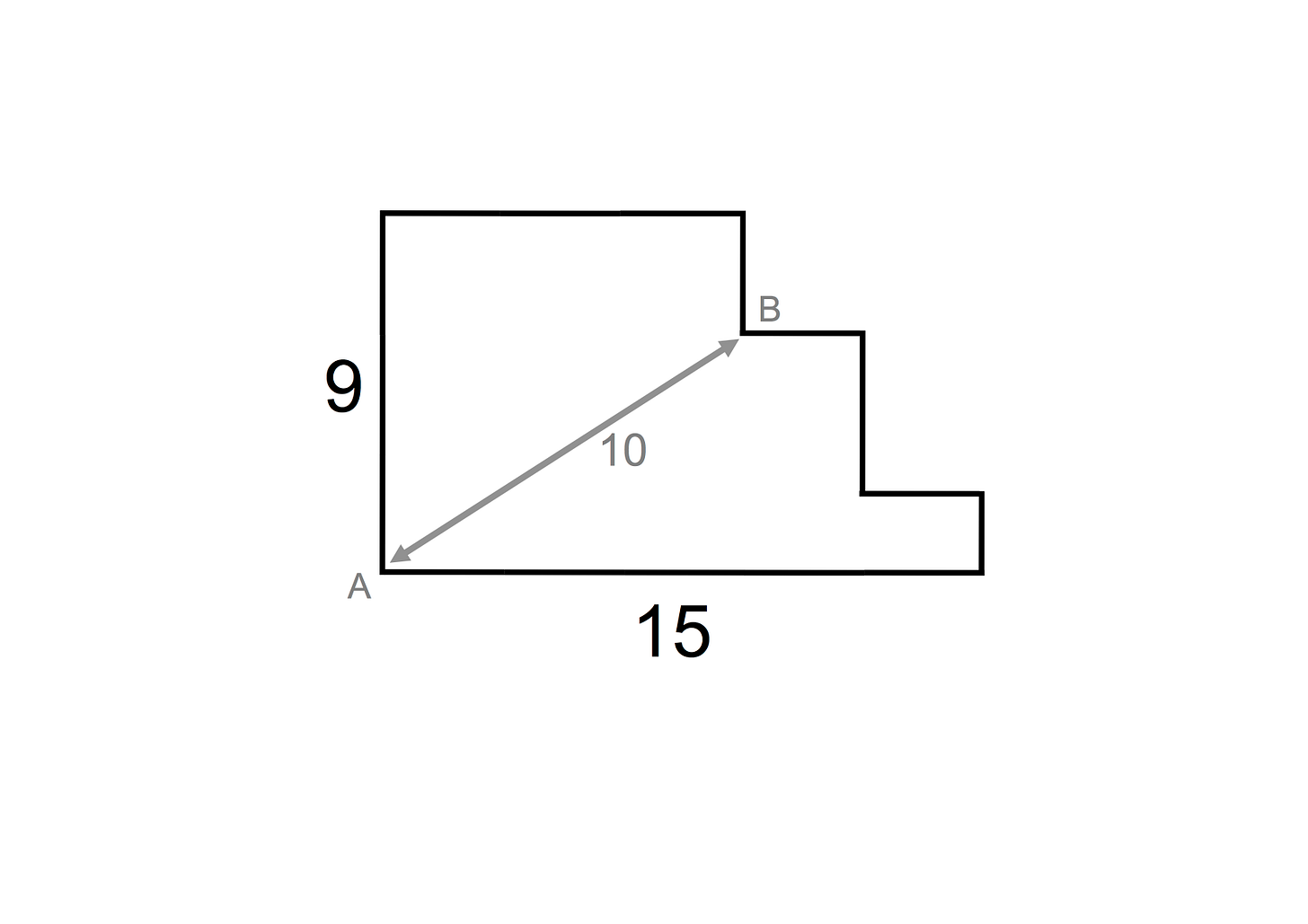

Take a look at this plan of a launchpad.

One side of the launchpad is 9 metres long and another side is 15 metres long, and the distance between point A and point B is 10 metres. You need to buy some fencing to go all around the perimeter of the launchpad. How much fencing do you need? You aren’t allowed to use a ruler, consult a book, or ask a friend.

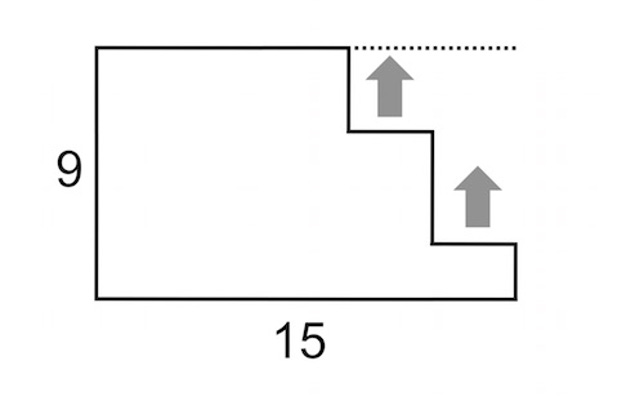

How did you get on? There’s a remarkably easy solution. You can ignore the distance between point A and point B. All you have to do is imagine moving these two horizontal lines up like this….

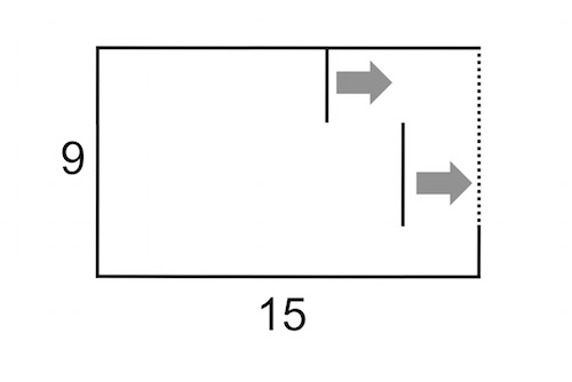

…..and these two vertical lines to the right like this…….

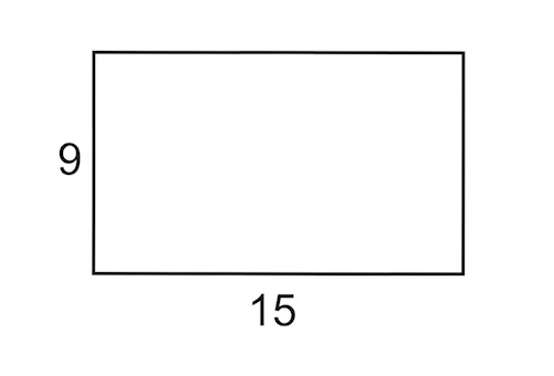

….and then you are left with a perfect rectangle like this…..

….and now it’s obvious that the launchpad’s perimeter is 48 metres (two sides that are 9 metres long plus two sides that are 15 metres long).
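The sliding argument can be checked with a short Python sketch. The launchpad plan is only shown in the image, so the corner coordinates below are hypothetical: a stepped outline 15 metres wide and 9 metres tall. The sketch adds up the actual edge lengths and compares them with the perimeter of the enclosing rectangle. The trick relies on the outline stepping inward without doubling back; the second, equally hypothetical U-shaped outline shows how an inward notch adds extra fencing.

```python
def perimeter(vertices):
    """Total edge length of a rectilinear polygon whose corners are listed in order."""
    total = 0
    for i, (x1, y1) in enumerate(vertices):
        x2, y2 = vertices[(i + 1) % len(vertices)]  # wrap around to close the shape
        # Every edge is horizontal or vertical, so one of these terms is zero.
        total += abs(x2 - x1) + abs(y2 - y1)
    return total


def bounding_rectangle_perimeter(vertices):
    """Perimeter of the smallest axis-aligned rectangle enclosing the polygon."""
    xs = [x for x, _ in vertices]
    ys = [y for _, y in vertices]
    return 2 * ((max(xs) - min(xs)) + (max(ys) - min(ys)))


# Hypothetical coordinates; the puzzle's real plan is only in the image.
# A stepped outline: 15 m wide, 9 m tall, one corner cut away in steps.
stepped_pad = [(0, 0), (15, 0), (15, 3), (12, 3), (12, 6), (6, 6), (6, 9), (0, 9)]
print(perimeter(stepped_pad), bounding_rectangle_perimeter(stepped_pad))  # 48 48

# A U-shaped outline with an inward notch: sliding the edges no longer works.
u_pad = [(0, 0), (15, 0), (15, 9), (10, 9), (10, 4), (5, 4), (5, 9), (0, 9)]
print(perimeter(u_pad), bounding_rectangle_perimeter(u_pad))  # 58 48
```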

And here is one extra puzzle….

A magician says that he can throw a ping-pong ball and have it go a short distance, come to a complete stop, and then reverse itself. The magician says that he won’t bounce the ball against any object, or attach anything to it. How can he perform such an amazing feat?

The solution? The magician throws the ping-pong ball straight up into the air.

How did you get on? Try them on your friends and annoy them too.

I am a huge fan of these lateral thinking puzzles and hope that you are too!

There was a time in late-1950s, early-1960s Paris when something was in the air. In just three years, 162 debut feature films emerged from a new generation of filmmakers, who created energetic, rule-breaking, joy-filled cinema, populated by young men and women running through the streets, filming on the hoof with their new lightweight cameras. They called it the nouvelle vague – the French New Wave. Richard Linklater’s black-and-white film Nouvelle Vague is a loving tribute to that scene. It follows the making of one of the era’s masterpieces: Jean-Luc Godard’s À Bout de Souffle, or Breathless for English-speaking audiences.

Each time we meet a new figure, there is a moment when they look to camera and an onscreen caption introduces them to us: François Truffaut, Agnès Varda, Claude Chabrol. The sheer number of names is astounding. Many of these talents were friends, who first emerged as writers for the new Cahiers du Cinéma magazine. And there is inevitably some nostalgia, watching Linklater’s film, for that lost era when physical magazines thrived, and living in the heart of a great capital city and pursuing your creative ambition was possible without family money. But what Nouvelle Vague really captures is the excitement of a new world built on talent and the desire to make great art.

The nouvelle vague changed world culture, not just cinema. The director Richard Lester took inspiration from it to capture what his Hollywood producers assumed was a passing pop fad. The resulting film, Quatre Garçons dans le Vent (Four Boys in the Wind) – or A Hard Day’s Night as we know it – helped The Beatles conquer the world.

The impact of the nouvelle vague movement was on my mind when I recently attended the British Screen Forum’s annual conference – a gathering of film and television industry creatives. With television and filmmaking in decline, there was eager discussion about how far internet influencers have opened up a screen alternative. Influencers create, film, edit and upload their material to the likes of YouTube, X, Instagram, Twitch and TikTok. In one way what they do is comparable to Truffaut and his friends – grasping the possibilities of the new technology to connect directly with audiences of their own generation.

YouTuber Jacob Collier has performed at the Proms as well as releasing acclaimed albums. Women and people of colour have benefited from being able to bypass traditionally biased gatekeeping by entertainment executives. There are many comedians who launched their careers posting online content, such as Mo Gilligan and, in the Covid lockdown, Rosie Holt and Munya Chawawa.

Meanwhile, others have shattered the old boundaries on entertainment formats, like Tommy Innit (real name Thomas Simons) who began in 2018 with video-game streams and filming his own adventures. He now has more than 27 million subscribers to his YouTube and Twitch channels. There’s a natural charm and wit to Tommy’s style – he’s progressed to live comedy and podcasting, and he’s used his profile to tackle misogyny among young men.

Such young talents seem to be very much in the spirit of the nouvelle vague. But back at the British Screen Forum, the panel discussion I watched featured no one like these names. Rather we met young “creators” with big social media followings who self-promote around topics like wellbeing or travel or entrepreneurship. Other than a few jobs for struggling studio technicians and stylists, what, I wondered, were such commercially focused figures really creating? Especially when the goal seemed nothing more than to tie up with a major brand as soon as possible.

The French new wave made art driven by love and ambition. Money was a benefit, but it wasn’t the prime motivation. That attitude can seem like a luxury in our time. But one thing still rings true: art made to be true art, rather than merely revenue-generating “content”, is what people will still be watching decades from now.

This article is from New Humanist's Spring 2026 edition. Subscribe now.

Artemis II has now made its way safely into space and many people have asked if I am related to its commander, Reid Wiseman. This isn’t the case, although I am prepared to step in and take charge of the mission if needed. However, I have written a book about the mindset that took humanity to the Moon and had the privilege of interviewing many of the Mission Controllers involved in Apollo 11 (huge thanks to Helen Keen and Craig Scott for making this happen). At the time of this historic event, they were an amazingly young bunch. Indeed, when Neil Armstrong walked on the Moon, the average age of the Mission Controllers was an astonishing 28 years old.

Here are three of my favourite learnings based around memorable quotes….

The power of optimism

Jerry Bostick grew up on a small farm in Mississippi, graduated with a degree in Civil Engineering and eventually ended up working at NASA. Bostick led the group that ensured Apollo 11 went in the right direction (known as Mission Control’s Flight Dynamics Branch). When I interviewed Bostick, he made a great comment on the importance of the Mission Controllers being a young, optimistic bunch:

“They decided to go with a bunch of young guys fresh out of college because we didn’t know that it couldn’t be done! When we were told that we needed to find a way of getting to the Moon, we just got on and did it.”

I have often thought about Bostick’s ‘we were so young we didn’t know it couldn’t be done’ comment. We can all embrace the optimism of youth. Don’t give up before you have begun. Instead, assume there is a way forward and start to try to find solutions.

Personal responsibility

Apollo astronaut Ken Mattingly would go to the launch pad and look at the giant Saturn V rocket. Once, he went into a large room packed with electronics and chatted with a technician about the risks involved in the mission. The technician said that he had no idea how many parts of the rocket worked, and Mattingly became concerned. However, the technician explained that it was his job to ensure that the electronics inside a single panel were in working order, and assured Mattingly that, when it came to that panel, the project wouldn’t fail because of him.

Mattingly realized that the complex Apollo missions were successful, in part, because everyone had the same sense of personal responsibility, and that was summed up in a single sentence: It won’t fail because of me.

It’s a simple but powerful idea. If you say that you are going to do something, do it. Don’t procrastinate, pass the buck, or cut corners. Be conscientious and make your word your bond. Be fully accountable for what you do and what you don’t do.

Fear and risk taking

Space travel is risky and even the smallest mistake can prove fatal. Flight Director Glynn Lunney noticed that people involved in the Apollo missions often ran away from these risks by holding yet another meeting or using some other delaying tactic. He understood that if the mission were to become a reality, at some point they were going to have to take risks. He summed up his thoughts in one sentence: If you’re going to go to the Moon, sooner or later you’ve got to go to the Moon.

It is a great mantra. At certain points in our lives, we need to find the courage to face fear in the hope of bringing about a better future. This means being action-based, taking risks (without being reckless), and focusing on overcoming potential problems. This action-based approach has two advantages. First, you learn by doing. Instead of talking the talk, you roll up your sleeves and get on with it, and so develop the skills needed to make your plans a reality. Second, by putting yourself out there, you increase the likelihood of meeting other like-minded people and coming across unexpected opportunities.

I wrote all this up (and much more) in my book, Shoot for the Moon, which looks at the new psychology of success through the lens of the Apollo 11 mission. Thanks for reading.

Climate, 14th century: The characteristic weather conditions of a country or region

In 1854, when the United States Magazine of Science, Art, Manufactures, Agriculture, Commerce and Trade used the phrase “climate changes”, they couldn’t have imagined that this would be one of the most pressing matters facing the human race in 2026. And yet the phrase was used like this: “Some have ascribed these climate changes to agriculture – cutting down the dense forests – the exposure of the upturned soil to the summer sun, and the draining of the great marshes.” So they were on to it even then.

The word “climate” first started being used in English in the 14th century as a borrowing from French and Latin. At the time, it was thought that the Earth had seven climate zones, each one determined astrologically. By 1400 or so, Sir John Mandeville, in his famous Travels, was explaining that the people of India were in “the first climate, that is of Saturn”, while the English climate, he said, was determined by the Moon. It was science, but not as we know it.

Of course, the word “climate” is often used figuratively, like when we talk about the “moral climate” or “economic climate”. According to the Oxford English Dictionary, this first occurred as early as 1661 with the phrase “climate of opinion”, a usage that wouldn’t sound out of place in a current Times editorial. But in uses of the word pertaining to the environment, the appearances of particular phrases tell a history all of their own.

“Climate action” and “climate emergency” both appeared in 1989. Seven years later, in 1996, “climate denial” and “climate sceptic” were first used. It took another few years for people to be labelled as “climate deniers” (2003) but, in opposition to these deniers, in 2014, along comes “climate strike”.

The word is likely to remain a battleground over the next few years. On the one hand, we’ll use it neutrally, to say what the weather is like. On the other hand, it will no doubt remain at the centre of some of the big struggles of the 21st century.

This article is from our Spring 2026 edition. Subscribe now.

Becoming George: The Invention of George Sand (Transworld) by Fiona Sampson

The title of poet and scholar Fiona Sampson’s latest biography denotes several journeys in the life of one of France’s first great women writers, George Sand. Starting with her childhood in rural Nohant in the aftermath of the French Revolution, it follows her to Paris where she takes part in some of the upheavals that followed, taking in her advocacy for women’s rights and against marriage, her self-discovery as a writer, her turbulent relationships with Alfred de Musset and Fryderyk Chopin, the public scandals caused in part by her adoption of masculine clothing, and by her use of a male nom de plume. To become George Sand, writes Sampson in her introduction to a writer no longer as widely read in the UK as many of her male contemporaries, required “will, imagination, chutzpah”.

Charting Sand’s life through the development of mass politics and rapid urbanisation, Sampson bookmarks significant shifts in Sand’s life by spending a few pages between each chapter analysing an image of her subject. As Sand lived from 1804 to 1876, and moved throughout her life in bourgeois circles, she was often captured not just by portrait painters, but also by photographers.

The first “impression” provides circularity, as it catches Sand with her granddaughters, just a year before her death, at the country house where she grew up. This allows Sampson to explore just how much France changed during Sand’s lifetime, positing Sand as a “bridge figure”. In the 18th century, writes Sampson, the likes of Bach, Goethe, Rousseau or Voltaire could shift European culture while working outside their capital cities, whereas Sand had to go to Paris to mix in literary and musical circles, at least for the prime years of her astonishingly productive career.

Sampson spends her first four chapters on Sand’s childhood as Aurore Dupin, establishing the formative nature of her parents’ cross-class relationship, her father’s early death after being thrown from his horse, her being raised largely by her grandmother, and the importance of Nohant as a location throughout her life.

Inevitably, the pace picks up once we move to Paris in 1831, when Sand chose her famous pseudonym – and successfully applied for a permit to wear men’s clothing in public, which was issued by the police at the time. (This permission did not stop newspapers or literary journals from making unkind comments about her lack of femininity, even if the concept of “transgender” was a century from existence, and friends as prominent as Victor Hugo said the matter of whether Sand was “my sister or my brother” did not concern him.)

Sampson, writing in an age of frenzied discussion about gender identity, wisely does not spend much time on the question of whether Sand was a proto-trans pioneer. Assessing Paul Gavarni’s drawing of Sand in male attire, produced for a gossip column in 1831 or 1832, Sampson quotes a letter from 1835 in which Sand writes, “I claim to possess, today and forever, the superb and complete independence which [men] alone believe [they] have the right to enjoy.” It was men’s privileges that Sand wanted, according to Sampson, not a male identity – at a time before the law, and sexologists, defined “the homosexual” or “the transvestite” as types.

Becoming George is at its most interesting when asking what it means to be an author – and what it means to write a biography. “Most of the ceaselessly branching alternatives and decisions that make up a life evade reconstruction,” writes Sampson at the beginning of chapter five, “Becoming a writer”. When discussing authors, this difficulty is compounded by the fact that the thing important writers spend most of their days doing – writing – does not have the same kind of technical interaction with a medium as painting or music, and tends to be solitary. But Sand was more sociable than many, and had complicated, cross-country affairs with famous composers and poets that gave plenty of material to future biographers and writers. (Notably, the German Expressionist dramatist Georg Kaiser’s 1922 play Flight to Venice imagined Sand’s relationship with fellow writer de Musset as a shared quest for artistic renewal.)

Sampson explores perennial questions for creative women, asking how Sand dealt with sexism, with men telling her “Don’t make books, make children”; the difficulty of pursuing a career while raising a family, with Sand prioritising her work, and her relationship with her daughter suffering as a result; and how her relationship with Chopin had to end because it was suffocating her ability to write. It’s full of insight that could only be reached by a biographer’s shared experience: “The most difficult step in becoming a published writer isn’t what you do with the blank page [but] what you do with the filled one,” writes Sampson, reflecting on how a person’s mid-twenties can be “when time passing begins to measure not progress but the stalling of some original trajectory”. This anxiety drives Sand to a phenomenal output – 70 novels and more than 50 other published works.

Naturally, an oeuvre so large is somewhat uneven, and Sampson charts the economic, social and political pressures for Sand to be so prolific, even if not every text can be analysed in detail in her 350 pages. Though Sampson occasionally overplays contemporary parallels (in lines such as “enjoying the kind of good life often featured in twenty-first-century lifestyle magazines”), this is a highly readable, subtly inventive book that argues for Sand’s importance not just as a writer but as a cultural figure.

Sampson closes with famous Paris photographer Félix Nadar’s portrait of Sand dressed as the satirical playwright Molière, which is “not of a man or woman, nor even of a woman dressed as a man, but of literary authority itself”. It reminds us that Sand is synonymous with the 19th century, France and the extraordinary written culture of that time and place – and that this remains the most important context within which to judge Sand’s life and work.

This article is from our Spring 2026 edition. Subscribe now.

I have recently received emails about my work on the pace of life, and so I thought that it would be a good time to reflect on the topic.

I’ve always been fascinated by the work of the late, great psychologist Robert Levine. In the early ’90s, Robert pioneered a brilliant way to measure the pace of life in cities by secretly timing how fast pedestrians walked.

His findings were startling. People in Western Europe were sprinting through life, while those in Africa and Latin America took a more measured pace. Within the US, New Yorkers were the speed-demons, while Los Angeles leaned into a slower groove.

But this wasn’t just about getting to lunch on time. Levine discovered that a faster pace of life was a double-edged sword. Higher speeds were linked to higher income and increased happiness, but also more coronary heart disease and a decrease in helpfulness.

In 2006, I teamed up with the British Council to measure walking speeds across the world. On August 22, our research teams went into city centres and found busy streets that were flat, free from obstacles, and uncrowded. Between 11.30am and 2.00pm local time, they secretly timed how long it took people to walk along a 60-foot stretch of pavement. Everyone timed had to be on their own, not holding a telephone conversation and not struggling with shopping bags. The results are shown in the table below.

City (Country), ranked from fastest (1) to slowest (32)

1 Singapore (Singapore)
2 Copenhagen (Denmark)
3 Madrid (Spain)
4 Guangzhou (China)
5 Dublin (Ireland)
6 Curitiba (Brazil)
7 Berlin (Germany)
8 New York (USA)
9 Utrecht (Netherlands)
10 Vienna (Austria)
11 Warsaw (Poland)
12 London (UK)
13 Zagreb (Croatia)
14 Prague (Czech Republic)
15 Wellington (New Zealand)
16 Paris (France)
17 Stockholm (Sweden)
18 Ljubljana (Slovenia)
19 Tokyo (Japan)
20 Ottawa (Canada)
21 Harare (Zimbabwe)
22 Sofia (Bulgaria)
23 Taipei (Taiwan)
24 Cairo (Egypt)
25 Sana’a (Yemen)
26 Bucharest (Romania)
27 Dubai (UAE)
28 Damascus (Syria)
29 Amman (Jordan)
30 Bern (Switzerland)
31 Manama (Bahrain)
32 Blantyre (Malawi)

We compared the 16 cities that appeared in both Levine’s work and our own, and found that the pace of life had increased by 10%! The pace of life in Guangzhou (China) increased by over 20%, and Singapore showed a 30% increase, making it the fastest-moving city in the study. Projected forward, the results suggest that by 2040, Singaporeans will arrive at their destination several seconds before they have set off.
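The sketch below shows how timings over the 60-foot stretch could be turned into walking speeds and then compared between two studies. It assumes that "pace" means walking speed. The function names and the sample timings are hypothetical and do not come from either study.

```python
# Hypothetical sketch: converts timings over the 60-foot course into walking
# speeds and compares two studies. Any timings passed in are illustrative,
# not data from Levine's study or the 2006 study.

FEET_TO_METRES = 0.3048
COURSE_METRES = 60 * FEET_TO_METRES  # the 60-foot stretch, about 18.29 m


def walking_speed(seconds: float) -> float:
    """Speed in metres per second for a pedestrian timed over the course."""
    return COURSE_METRES / seconds


def percent_change_in_speed(old_seconds: float, new_seconds: float) -> float:
    """Percentage change in speed when the time over the same course
    changes from old_seconds to new_seconds.

    Because the distance is fixed, the speed ratio is old_seconds / new_seconds.
    """
    return (old_seconds / new_seconds - 1) * 100


if __name__ == "__main__":
    # Invented example: a city whose typical time falls from 12.0 s to 10.0 s.
    print(walking_speed(10.0))                  # about 1.83 m/s
    print(percent_change_in_speed(12.0, 10.0))  # about 20% faster
```

Because speed and time are inversely related, a 10% rise in speed corresponds to a fall in walking time of roughly 9% (1 / 1.1 ≈ 0.91). It therefore matters which of the two measures a percentage refers to.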

A few years ago, I had the privilege of interviewing Robert Levine about this work. I believe it may have been his final interview. You can listen to him discussing the “Geography of Time” below.

Finally, what is your pace of life? Are you moving too fast? Here are four questions to help you to decide.

– When someone takes too long to get to the point, do you feel like hurrying them along?

– Are you often the first person to finish at mealtimes?

– When walking along a street, do you often feel frustrated because you are stuck behind others?

– Do you walk out of restaurants or shops if you encounter even a short queue?
